There are a million reasons why you would want to monitor the battery voltage of your battery-fed ESP8266. I will illustrate it with a Wemos D1 mini and the battery shield.

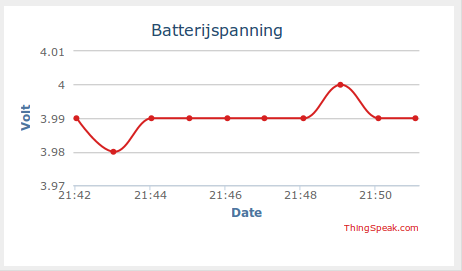

I am using a small 720 mAh LiPo cell. If I just let the Wemos access the internet continuously it will last 6.5 hours, but for this example I will put the Wemos in deep sleep for a minute, then read the battery voltage and upload that to Thingspeak.

You only need to make a few connections:

First, connect RST with GPIO16 (that is D0 on the Wemos D1 mini). This is needed to let the chip wake up from deep sleep.

Then connect the Vbat through a 100k resistor to A0.

So why a 100 k resistor?

Well, the Wemos D1 mini already has an internal voltage divider that connects the A0 pin to the ADC of the ESP8266 chip. This is a 220k resistor over a 100k resistor.

By adding a 100k resistor in series, the total resistance becomes 100k+220k+100k=420k.

So if the voltage of a fully charged cell is 4.2 Volt, the ADC of the ESP8266 gets 4.2 * 100/420 = 1 Volt.

1 Volt is the max input to the ADC and will give a Raw reading of 1023.

The True voltage then can be calculated by:

```cpp
raw = analogRead(A0);
voltage = raw / 1023.0;   // 0..1 Volt at the ADC
voltage = 4.2 * voltage;  // scale back up to the battery voltage
```

Of course you could also do that in one step, but I like to keep it easy to follow.

If you do use this method, do realise that the resistors drain the battery as well, with a constant 10 uA (4.2 V / 420,000 ohm). The current consumption of an ESP8266 in deep sleep is about 77 uA. With the battery monitor this would be 87 uA, which is a sizeable increase. A solution could be to cut off Vbat from A0 with a transistor, controlled from an ESP8266 pin.

A program could look like this:

```cpp
/*
 * Wemos battery shield, measure Vbat
 * add 100k between Vbat and A0
 * Voltage divider of 100k+220k over 100k
 * gives 100k/420k
 * ergo 4.2 V -> 1 V
 * Max input on the ADC = 1 V -> 1023
 * 4.2*(raw/1023) = Vbat
 */
// Connect RST and GPIO16 (RST and D0 on the Wemos)
#include <ESP8266WiFi.h>

unsigned int raw = 0;
float volt = 0.0;

// Time to sleep (in seconds):
const int sleepTimeS = 60;

void setup() {
  Serial.begin(115200);
  Serial.println("ESP8266 in normal mode");

  const char* ssid = "YourSSID";
  const char* password = "YourPW";
  const char* host = "api.thingspeak.com";
  const char* writeAPIKey = "YourAPIkey";

  // Read the battery voltage through the divider
  pinMode(A0, INPUT);
  raw = analogRead(A0);
  volt = raw / 1023.0;
  volt = volt * 4.2;

  // Connect to WiFi network
  WiFi.begin(ssid, password);
  while (WiFi.status() != WL_CONNECTED) {
    delay(500);
  }

  // Make the TCP connection; if it fails, skip the upload but still go to sleep
  WiFiClient client;
  const int httpPort = 80;
  if (client.connect(host, httpPort)) {
    String url = "/update?key=";
    url += writeAPIKey;
    url += "&field6=";    // I had field 6 still free, that's why
    url += String(volt);  // change float into string

    // Send request to the server
    client.print(String("GET ") + url + " HTTP/1.1\r\n" +
                 "Host: " + host + "\r\n" +
                 "Connection: close\r\n\r\n");

    // Wait briefly for the reply so the request is out before sleeping
    unsigned long start = millis();
    while (client.connected() && !client.available() && millis() - start < 5000) {
      delay(10);
    }
    client.stop();
  }

  // Sleep
  Serial.println("ESP8266 in sleep mode");
  ESP.deepSleep(sleepTimeS * 1000000UL);
}

void loop() {
  // all code is in setup()
}
```

The new battery shield

There now is a new version (V1.2.0) of the battery shield that has an inbuilt resistor connecting A0 to the battery, through jumper J2 (‘jumper’ being a big word for 2 solder pads), so if you want to measure the battery voltage, all you need to do is to put some solder connecting J2.

However, rather than a 100k resistor, a 130k resistor was used. The voltage divider thus becomes 100/(130+220+100), so for a full reading of 1023 (= 1 Volt on the ADC) a total of 1 Volt * (130+220+100)/100 = 4.5 Volt would be necessary.

In reality the Lipo cell will not give off 4.5Volt.

Depending of course on what your project is required to do, my advice would be to put the thing in the deepest possible sleep once it hits the low end of the battery voltage, maybe only to sound a piezo buzzer for say 200 ms when it briefly wakes up. That will be the safest bet to keep your LiPo alive as long as possible. The cell shown probably has a low-voltage protection circuit built in though. Still, the warning is helpful.

Sidenote: The other day I accidentally miswired a cell like that (600 mAh version) with reversed polarity. In a few ms, the magic smoke appeared and the cell seemed to have lost quite a bit of its capacity. Hard to believe things going south that fast. Turned out the protection circuit had fried a bit. Without it, the cell runs fine with 550 mAh between 3.0 and 4.2 Volt.

I agree. It is quite easy to do that. My intention of course is that it will not get too low, because a solar cell is to provide enough input.

ESP.deepSleep is as far as I know the deepest sleep, but when the battery voltage hits 3.0 Volt it might be wise to let the deep sleep last as long as possible and do no more uploads.
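The low-battery idea discussed here could be sketched as a small decision helper. This is a hypothetical example, not code from the sketch above: the struct, the function name and the one-hour long sleep are assumptions, and the longest interval `ESP.deepSleep` accepts is limited, so check what your core allows.

```cpp
#include <stdint.h>

// What to do on the next wake-up cycle.
struct SleepPlan {
  bool upload;           // connect to WiFi and upload this cycle?
  uint64_t sleepMicros;  // value that could be handed to ESP.deepSleep()
};

// Below (or at) lowVolts: no upload and a long sleep; otherwise the normal cycle.
// All defaults are illustrative assumptions.
SleepPlan planNextCycle(float vbat,
                        float lowVolts = 3.0f,
                        uint32_t normalSeconds = 60,
                        uint32_t longSeconds = 3600) {
  SleepPlan plan;
  if (vbat <= lowVolts) {
    plan.upload = false;
    plan.sleepMicros = (uint64_t)longSeconds * 1000000ULL;
  } else {
    plan.upload = true;
    plan.sleepMicros = (uint64_t)normalSeconds * 1000000ULL;
  }
  return plan;
}
```

In `setup()` one would measure `volt` first, call `planNextCycle(volt)`, skip the WiFi part when `upload` is false, and pass `sleepMicros` to `ESP.deepSleep()`.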

I am not sure at what voltage the esp stops working, but it does still work at 3Volt, and will proceed to discharge the cell.

After 3.5 days my cell has discharged to 3.7 Volt. Obviously that still can be better, but the battery shield is not the most efficient. I will see how long it will take for the last 0.7 Volt. Will repeat it with a bare ESP8266-12.

Yes, some components really don't like reversed polarity, but it seems you still had some luck.

Hello friend, the sketch is giving an error in the line float volt = 0,0; help

as you seem to direct this to Jeroen, I am not sure what sketch you refer to?

From “the guy with the Swiss accent” I understood there is a setting that the ADC on the ESP8266 reports Vcc without any external components. Might save two resistors and a few uA. I think the episode where he experimented with a Li-Ion button cell.

that is true, but that reports the Vcc on the board, which under normal circumstances would be 5 Volt, whereas I wanted to measure the direct battery voltage that even when lower, ideally should still give 5 Volts on the board. But I will check his video to make sure. Thanks

Having said that… “the guy with the Swiss accent” is a hero

Are you sure? AFAIK it reports the Vcc on the bare ESP module (so should be 3.3-ish). Now I know you want the battery voltage, and after boosting and then dropping to 3.3, all is lost. This is IMHO very usable if you hook up an ESP to a LiPo with just a good LDO. Agreed about “the guy”!!

my mistake. I mean the VCC on the esp indeed.

Needless to state the battery shield is not a good low power option at all.

Hi,

I added the 100k resistor as explained above, but there is some deviation between the readings from the ESP8266 and the voltage I measure manually with the multimeter. In my setup an 18650 battery with 3000 mAh is used, powering a Wemos D1 mini with DHT22 shield and battery shield. The 18650 had a voltage of 3.53 V when I measured with the multimeter, while the reading from A0 was 3.24 V. Currently I am charging; at 4.09 V (multimeter) A0 reports 3.88 V. So there is an inaccuracy of between 0.21 V and 0.29 V. While I could “fix” that by adding an offset, I'm interested in the reason behind this.

Could be many reasons. Your multimeter might not be accurate, and then again there might be a tolerance in the resistor(s).

What the software and hardware do is based on calculation. The ADC needs 1 Volt. So ideally a 100k resistor on top of the internal voltage divider should give 1 V at the ADC when the resistor is fed with 4.2 Volt. However, if any of these resistors is not the exact value it is labelled with, the outcome is of course different.

I put a 100K from the 3.3v pin to A0 and I am getting within .05v. That can be easily adjusted in the program. There will always be slight variations.

Thanks Mike

Hi,

Could you please tell me the name of the connector on the lithium battery?

Thank you !

The connector on MY lipo battery is the standard lipocell connector. I think it is called a JST-PH. The shield however has a different connector, I think that is a JST-XH

Hi, I see that Wemos has a new battery shield V1.2 where it is possible to connect a 130K resistor to A0 via jumper 2 (J2). What will the calculation look like if we have 1.3 V instead of 1 V in this case?

Oh, that's cool. Thanks for letting me know. I will check it out.

I see it here.

In the diagram of the board wiring, the resistor shown is Brown – Black – Red = 1k, not 100k (Brown – Black – Yellow).

Thanks, that’s why i usually pay more attention to what the text says, but I will see if i can change the picture

Has anyone got any tips for calibrating the ADC values against the battery capacity?

I have a WeMos D1 mini. I’m not convinced it's genuine but it seems to work fine. I measured across A0 and GND with two separate multimeters and got a reading of 314k and 316k respectively, so somewhat short of the intended 320k voltage divider (unless there is another path to ground via the ADC itself even when the WeMos is powered off, in which case that could lower the resistance value, right?). I don’t know how to work out the value for each of R1 and R2 without identifying exactly which tiny SMD resistors they are and trying to scratch away at the lacquer that may be coating the exposed contacts.

With an added 100k resistor between A0 and the battery positive terminal (carbon film, actually reads at 97k on the multimeters) and an 18650 LiPo battery as a power supply for the WeMos, charged to 4.16V I get a reading of 917 on the ADC. This seems quite low, even if I play around with the numbers for R1 and R2 quite significantly.

I just wondered if anyone has a good way to baseline the ADC so that I can make the battery monitor as precise as possible. I’m not looking for exacting +/- 0.01V accuracy or anything, just a way to tune the readings I get to be as accurate as possible.

thanks for reading

There are several ways to do that and I think you may be making it yourself a bit unnecessarily difficult.

For one, you could just measure r1 between A0 and the ADC pin, and see what r2 gives you by measuring between the ADC pin and ground. R1 should give you an accurate reading; the r2 reading might be influenced by the ADC input itself, but I presume that if you switch off the Wemos it should be pretty accurate.

Another way is to use a known voltage and apply that to A0. Now the ADC reads a max of 1 Volt, so, if your resistors were correct, applying 3.2 Volt should put 1 Volt on the ADC and your reading should be 1023.

If it isn't, you can calculate the ratio of the resistors, but it would be simpler to just use a correction factor in your software.

As you seem mainly interested in measuring the battery, obviously you would need an extra series resistor and you can play around with that value, or use a correction factor again in your software. Be sure though that you are not measuring more than 1 Volt on your ADC, it will not immediately destroy your Wemos, but it will clip the top value readings.

A reading of 917 is in fact 917/1023 Volt = 0.896 Volt, or about 0.9 Volt.

Suppose that carbon resistor is indeed 97k, you get two equations:

4.16*r2/(97k+r1+r2)=0.9

and

r1+r2=314

You can calculate r1 and r2 from that by substitution.

4.16*r2/(97+314)=0.9

4.16*r2/411=0.9

r2/411=0.9/4.16

r2=411(0.9/4.16)

r2=89k

r1=314-89=225k

This is of course all under the premise that the readings you gave were correct.

I suggest you apply some other voltages and recalculate to see if it gives the same values

But as I suggested earlier, you could just measure r1 between A0 and the ADC pin, and see what r2 gives you by measuring between the ADC pin and ground.

All those findings should be very close.

You may make it even easier by just using r1 and r2, putting a voltage of 3 Volt on A0 and seeing what reading you then get. That way you eliminate the 97k from the equation.

Of course you could just use a trimpot for the extra series resistor and trim that till you get the required output on the ADC.

Thank you, that is excellent advice and I really appreciate you taking the time to reply. For about 30 minutes I couldn’t work out what you meant by “just measure between A0 and the ADC”, then I realised that the ADC pin of the ESP12-E board that is soldered on top of the WeMos board is available to be probed with my multimeter. Now I feel a little silly. I’ll let you know how I get on with it, as you say I will probably just end up using a correction factor in my software but I wanted to check I wasn’t missing a trick elsewhere

Don't feel silly, we have all overlooked things 🙂

Happy I could be of assistance.

Sorry, I’m an incredible noob. What is ADC and where I can find the pin to measure it?

Luca, ADC is ‘analog to digital conversion’. It is used to measure an analog signal. On the ESP8266 chip itself it is the TOUT pin, but with you being a noob, I presume you have an ESP8266 on a Lolin or Wemos board. There it is the A0 pin. As the chip itself can only take 1 Volt max on its TOUT pin, the board adds a voltage divider, a 220k over a 100k resistor, which allows a max voltage of 3.2 Volt to be measured. So if you want to measure a LiPo battery, which has a max of 4.2 Volt, you will need an extra 100k resistor in series with A0.

So I managed to solder a piece of wire to the ADC pin of the ESP-12E chip. It seemed that just touching it with the multimeter lead yielded no reading. I guess there is some sort of flux/lacquer covering the contacts to prevent oxidisation.

I got a reading of 216k between the A0 pin of the WeMos and the ADC pin of the ESP and a reading of 98.0k between the ADC pin and the GND pin of the WeMos. So putting those numbers into the voltage divider calculations (along with the additional 100k resistor that reads as 97k on the multimeter) yields the following:

Vout = (Vin x R2) / (R1 + R2)

Vout = (4.16 x 98) / (98+313) = 0.99V

So the *expected* ADC value given an input of 4.16V should be:

ADC = (Max-ADC-value / Max-mV) * mV-in

(that is the maximum ADC reading divided by the max ADC voltage in mV, multiplied by the voltage at the ADC pin in mV)

ADC = (1023 / 1000) * 990 = 1013

This is still a long way above the ADC value of 917 that I get when I construct the circuit and flash the WeMos with my code.

Then last night it dawned on me. All the calculations I had done (and I’ve ended up doing quite a lot of calculations), pointed to a maximum input voltage with my setup of around 4.63V, which equates to a maximum voltage on the ADC pin of 1.1V

This makes sense when I plug any of the ADC readings that I then manually verified with my multimeter:

4.16V @ 917

mV-max = (mV-in * ADC-max) / Actual-ADC-reading

= (4160 * 1023) / 917 = 4640 mV

4.0V @ 883

= (4000 * 1023) / 883 = 4634 mV

3.86V @ 848

= (3860 * 1023) / 848 = 4656 mV

Taking the average of these and plugging it back into the voltage divider calculation yields:

Vout = (4.643 * 98) / (98 + 313) = 1.10V

I’m glad i’ve got to the bottom of the discrepancy. I hope this explanation of my analysis and calculations may prove useful to others.

Does anyone have any thoughts on why my ADC seems to hold 1.1 V as its reference voltage instead of 1.0?

Well for one thing, your meter might be off 10% 🙂

But do you mean that when you put 1.1 V on your ADC you get a reading of 1023, and when you put 1 Volt on it you get a lower reading?

Until your comment, no I hadn’t applied 1.0V directly to the ADC pin; that was worked out by reading ADC values of higher voltages through the voltage divider (after I had measured more precisely the resistor readings of R1 and R2).

Since then I have applied 1.0V directly to the ADC pin and it gives me a reading of 980. When I apply 1.1V the reading goes off the scale, but when I apply 1.077V I get the max reading of 1023.

Ps. Both multimeters agree on the voltage of 1.0V when on the 20V scale, different brands bought at different times. So unless they’re both off by the same amount I have a reasonable confidence in their accuracy 🙂

As Jeroen also remarks: the ESP8266 ADC is uncalibrated and what you describe is typical. The ADC can measure ‘1 Volt’ max. It is just that that 1 Volt isn't always precisely 1 Volt. In your case it is 1.077 Volt. So that gives you your calibration factor, which no doubt would be different for another ESP8266.

The ESP8266’s ADC is massively uncalibrated. 1024 is “about” 1V, but the calibration is pretty different per chip. A good practice, assuming you really need this, is to store the calibration in EEPROM. It also unfortunately means that you cannot build a big bunch of hardware where you rely on a calibrated ADC. It’s a nasty omission.

Very true Jeroen. Especially as it only has one ADC channel, I use a separate 4channel ADC anyway. Just a simple PCF8591 with 8bit accuracy. For more accuracy an ADS1115

Would a BC457 transistor be fine to switch the voltage monitor on/off? It would have been great if you could provide a scheme for that setting, too.

Thank you for this awesome blog entry!

Yes it would, but depending on circumstances maybe a Logic level FET would be better as that I think has a lower voltage drop than a regular transistor.

But in fact I have used a BC547 to Switch off voltage to Soil-moisture probe (voltage less of an issue there).

You may want to have a look here: https://arduinodiy.wordpress.com/2017/08/19/switching-a-humidity-sensor-off-and-on/

or google for “High side switching”

Obviously you would have to recalibrate the series resistor and formula used for the transfer of Raw analog to Voltage

I used your code, but found a mistake in it.

The right line is:

client.print(String("GET ") + url + "&headers=false" + " HTTP/1.1\r\n" + "Host: " + host + "\r\n" + "Connection: close\r\n\r\n");

I used a NodeMCU v3 LoLin board; the 3.3 V power supply gives an ADC value of 1010 for me.

So I must calculate the correct value to measure correct voltage.

For 1010 ADC Value the correct number is 3.34685598.

In code:

pinMode(A0, INPUT);

raw = analogRead(A0);

volt=raw/1024.0;

volt=volt*3.34685598;

So works ok everything!

Thanks for sharing!

Thank you. I didn’t experience any mistake in the code but as WordPress isn’t really ‘ friendly’ in reproducing code it is possible an error was introduced

Here is what I have used and it compiles every time, but getting my ThingSpeak to record or even show the voltage hasn't worked for me yet. Using just the Mini D1 with a 100K resistor on A0, battery from resistor to 5 VDC. Could use some help here, thanks (newbie). client.print(String("GET " + url + " HTTP/1.1\r\n " +

"Host: " + host + "\r\n" +

Maybe part of your question disappeared, but it should be:

client.print(String("GET ") + url + " HTTP/1.1\r\n" + "Host: " + host + "\r\n" + "Connection: close\r\n\r\n");

and as WordPress sometimes screws up code, here is a screenshot of the section of my working code

Hi there .. great project .. Would you mind explaining how to do this with a ‘barebones’ ESP8266 12F .. AiThinker type?

You do that in the same way but you need to construct your own voltage divider as the esp8266-12 does not have one. Voltage divider needs to bring 4.2volt down to 1 volt.

Although you could use the Wemos shield in this case as well, I wouldn’t, but would rather get one of those cheap LiPo charger modules, forget about generating 5 Volt like the shield does, and focus on just 3.3 Volt.

The latest official Wemos Battery Shield (v1.2.0) has a jumper connection J2 connecting (+) to A0 via a 130 kOhm resistor. https://wiki.wemos.cc/products:d1_mini_shields:battery_shield No need to solder the resistor on your own, just short J2. 130 kOhm is strange, though. The calculation needs to be adjusted.

Thanks, yes indeed it has. The 130k needs 4.5 Volt on Vbat, which of course it will never be. I guess the 130k was chosen to be a bit on the safe side.

Hi, super stupid newby question. I have looked at the new battery shield schematic and before going into soldering I would like to be sure about J2 jumper: in the back of the battery shield there are 2 metal “pins” labeled as “A0-BAT”… is that what I am supposed to solder? I tried to manually short them out with a cable but I keep reading 65535 in A0. Thanks in advance (and congrats for the great blog)

yes, that is the one: https://wiki.wemos.cc/_media/products:d1_mini_shields:sch_battery_v1.3.0.pdf

AFAIK the resistor on the shield is 130k and the Wemos has its 220k over 100k divider, so you have a 130k in series with that divider.

That comes to a factor of 100/450, so let's say your battery is fully charged to 4.2 Volt, that gives about 0.93 Volt on the ADC, which is within what the ESP can measure. A full reading of 1023 would need 4.5 Volt though, so I do not really understand the choice for that 130k resistor.

Having said that, I don’t think that is why your reading is 65535; that is however a known problem that has popped up on reading the Vcc. Just want to make sure you have your Wemos set up to have A0 read EXTERNAL voltage.

Your max reading should be 1023 and a simple analogRead(A0);should be OK

Thank you for your kind words

Thanks a lot for your answer.

Yes. Battery Shield v1.3.0 is the one I am using.

Super simple setup: wemos d1 mini on top of the battery shield, which in turn is connected to a 3.7V 650mAh LiPo (multimeter read when fully loaded = 4.12V).

No additional connections in place.

The code to read A0 is the one suggested in your original post: pinMode(A0, INPUT); raw = analogRead(A0);

I will solder J2 and let you know if that modifies my current 65535 A0 reading.

Thanks!

Great

Hi, I’m facing the same issue as Cesar. All my readings return 65535. I’m using the 1.2.0 version of the board:

Did any of you face the same issue and find a fix? Thanks in advance,

Laurent

There was someone in the comments who experienced that. My suggestion in his case was to make sure he had the Wemos set up for measurement of external voltage. I am not sure what version you have, but he said he was going to try soldering J2. I did not hear back from him, so one of those things might have solved it.

thanks for the reply.

I tried to insert the picture of the board I have, but seems that it failed. here is the link to it. (v1.2.0)https://www.az-delivery.de/cdn/shop/products/batterie-shield-fur-lithium-batterien-fur-d1-mini-869275.jpg?v=1679398358&width=1100

The person you mention wrote that in the comment just above mine 4 years ago; I was hoping he would read and reply to my message :) That said, I had these lines in my code (before adding the battery shield): ADC_MODE(ADC_VCC); ESP.getVcc()

I removed that part of the code and I now have a more normal reading on A0 with J2 soldered. With J2 open, the reading is 0.

so thanks for your great blog, it helped me a lot 🙂

cheers,Laurent

Thank you for yr kind words. Glad i could help. The line you removed indeed measures the Vcc on the ESP. I will check that link. I only have the old/first version of the shield. I would like to be able to see/list the differences in an update to this post

Hi, Thanks for this article. I’m doing some tests with a 1.2.0 battery shield for the wemos and I’m having some lights and shadows… I think the best way to do a calibration is to directly measure the voltage with a multimeter, and then the reading, and just map both values.

however, my problem is that I’m getting very disparate readings, so if I do three analogRead(A0) with 20ms of separation, I’m getting values that are different by more than 40%, say 3.2, 2.4, 2.6 for example. I’ve implemented a full voltage divider with two resistors, 220k and 680k, that would give 0,904v for a 3.7v input. anyway my problem is not the precision but the lack of consistency on readings.

someone has suggested to add a high pass-filter, but I find this is overkill and probably I’m doing something wrong… any hints?

I agree, the readings on the ESP8266, or the Wemos for that matter, seem to be off, though a variation as big as you describe I have not seen. There are two possible sources for the problem: either your battery voltage varies (something that can be hard to see on a DMM) or there is variation in the ESP readings themselves. The latter is hard to fix, but the former may be remedied by a small electrolytic capacitor over the battery or at A0.

Definitely it is the battery. When measuring voltages while connected to usb power it gets a steady value. thanks

Try new battery or put a 100uF cap over it
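As a software complement to the capacitor (not a replacement for it), several readings can be taken and the median used, which discards the occasional outlier. This is a hypothetical helper, not something from the original sketch; the fixed buffer of 16 samples is an arbitrary assumption.

```cpp
#include <algorithm>
#include <stddef.h>

// Median of up to 16 raw ADC samples. Extra samples beyond 16 are ignored.
// For an even count the upper of the two middle values is returned.
unsigned int medianRaw(const unsigned int* samples, size_t count) {
  unsigned int sorted[16];
  if (count == 0) return 0;
  if (count > 16) count = 16;
  std::copy(samples, samples + count, sorted);
  std::sort(sorted, sorted + count);
  return sorted[count / 2];
}
```

In a sketch one would fill a small array with `analogRead(A0)` values a few milliseconds apart and convert only `medianRaw(...)` to a voltage.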

I’m reading in a 27.4 V battery level (Li-ion 7S), so I added a 3.3 MOhm resistor to A0. It’s working, but the input is quite rough. I’m dumping it into an SQL DB and using Grafana to plot it, and it’s got quite a lot of variation in it, about 0.5 V. Even with a 1023-step A/D it should be better than this? That is about 30 digits per Volt, so it’s moving 15 units? Is that normal?

Brendan, your divider is 3,620/100. Your 27.4 Volt then goes to 0.76 Volt if I am correct

a variation of 0.5 Volt (on the 27.4 I presume) would be 0.0138 (13.8mV) volt on your ADC.

As your ADC is 1023 per volt that is in fact 0.977 mV per ‘point’ so 13.8 mV (or 0.5 Volt predivider) indeed is some 14 units. That seems quite a lot indeed.

I know the ADC of the ESP8266 is not extremely on point, but 14 units off seems like a lot.

I am wondering if you can see a certain trend through time. I can imagine the resistors warming up. but even with 27.4 volt over 3.620 MOhm that is only 27.4^2 / 3,620,000 = 0.2mWatt that should not make a difference.

I am sure you wondered this yrself….is yr 27.4 Volt stable?

Yes, I believe the voltage is stable. I personally think it’s down to the resistance changing. In order to experiment a bit I installed a connector (a 2-slot female breadboard connector, the same as the ones used to connect the boards together) on the prototype board; the resistor is just bent and inserted into the slot, so that if I want to monitor different voltages I just swap it out. However, I suspect that at 3.3 MOhm any movement or even temperature variation on that connection could make a big difference. I’ll try to tidy that up and see what happens. I also wonder about the USB power. I’ve used some power bricks in the past that make readings worse. I have some magnetometers that don’t like dirty power input, so I’ll swap that out as well.

Well, I think you are right and I was going down that road a bit as well, even though warming up of the resistor due to dissipated heat might be minimal. The way you describe your resistor connection could certainly play a part.

As long as the USB gives a voltage well above 3.3 Volt plus the voltage drop of the regulator, it shouldn't have a big influence either.

What may play a part is actually the ADC itself: I am storing the Vcc of an ESP in a database. I measure it directly/internally and I see that it slightly varies as well, while it should not. Such a slight variation on the 3V3 level would of course calculate as a bigger variation coming from 27 Volt.

Tbh I never really researched the ADC accuracy of the ESP, but I hear it isn't perfect.

Hi, Is there any way I can control the charging of a battery via ESP8266 using this board???

Yes, but you would need various sensors and other peripherals.

Probably simpler and cheaper to get a dedicated module. For e.g. LiPo’s those are fairly cheap

Thank you for your reply, can you suggest me any board or any idea that I can use to control the charging of a battery via ESP8266. (Thanks in advance)

I have no knowledge of such a board but a simple tp4056 module will do it without esp

Thank you for your reply, can you suggest me any board or any idea that I can use to control the charging of a battery via ESP8266. (Thanks in advance)

See my reply under yr other question

Hi E, Thanks for the info. I’m confused about it though. I have a Wemos D1 Mini Pro and Battery Shield. Your picture displays the battery shield I’m using (the old one without J2). The A0 pin on the Wemos is being used for a light sensor. The A0 on the battery shield is free. Your tutorial is about modifying the Wemos module but the photo shows the battery shield. How do I monitor voltage on the battery shield A0 through the Wemos D1 Mini Pro in the way you’ve mentioned?

Hi Jason, indeed you do seem confused. I am not modifying any shield, just using a resistor to connect 2 things.

Also, A0 on the shield is the same A0 on the wemos, it is not an extra A0 just as all the other pins are not ‘extra’. The moment you insert the shield they all connect to their name sake. Thus if you are using A0 for a sensor, you cannot use it to measure voltage.

I hope that clears things up but if you have additional queries do not hesitate to ask

wow, great to see so many comments over such a long time, shows lots of interest.

I have a semi-related question. I don't care too much about measuring my battery; it basically has to last 10 hours, so I think a 2000 mAh cell should be fine.

My question is about hooking this battery up to the Wemos LiPo shield (for which I need to get a connector converter).

I had thought to build a physical switch into my case. This would break the positive connection on the lipo, so would turn the wemos d1 mini off.

I realise I would have to switch it back on to charge, but is there any other danger/issues with this?

Let me just see if i understand you right: you want to put a switch in between the positive lead of your lipo and the battery shield right?

I see no danger or other issue with that other than what you already mentioned.

If i misunderstood your question please do not hesitate to ask again

Exactly that. My Arduino project is not a long-term monitoring thing, but is part of a commentator info system for races. I want everything in a box including battery, and I don’t want to have to keep unscrewing it, so I thought just putting in a switch will mean the battery can be switched off. Not sure if it is better to switch negative or positive. I will have to do some googling on that!

Thanks

D

Usually the positive is switched.

Good luck w yr project

thanks for your help .Could you tell me what would be the value of the resistance for a power supply of 8.4 v? I get 520 Mohm, but I’m not sure. thank you.

Well if you want to measure 8.4 volt. It is as follows: you want 1 volt over that internal 100k resistor, so you need to have 740 k on the top side of your voltage divider. You already have 220 k, so you need to add 520 kiloohm, NOT 520 megaohm.

Many thanks for your reply. Will the increase in current be about 10 uA, as you say for 4.2 V? And would it then not be necessary to add a transistor?

Measuring the battery voltage will take current. When the voltage divider is adapted for 8.4 volt, it still will be 10uA. Whether it is ‘necessary’ to use a transistor to cut the voltage when you are not measuring is a matter of choice and circumstance: what is the max charge of the battery, is it regularly replenished from e.g. a solarcell? Is it going into deep sleep? etc.

But of course a transistor/FET can be used to switch off the voltage divider. This must be a high-side switch, otherwise you may blow up your ESP. If you are not putting your ESP to sleep, one can wonder if switching off the voltage divider makes a substantial difference. But if you do bring the ESP into deep sleep, make sure the pin controlling the transistor keeps the desired state when the ESP goes to sleep. Also, for a high-side switch, a PNP transistor or pFET might be the better choice, but that will be hard to control with a 3V3 pin, so you may have to bring in a few more components... which will all cause a current drain of their own.

If you want to add a transistor to cut off current to your voltage divider, perhaps this is a solution https://hackaday.io/project/20750-homefixer-esp8266-devboard/log/72236-how-to-measure-the-battery